AI-driven code generation is no longer a distant vision: it is rapidly becoming central to high-performance computing (HPC) and deep learning. In the pursuit of speed, efficiency, and adaptability, agentic reinforcement learning (RL) is emerging as a way to automate complex code optimization tasks that once demanded years of human expertise. The recent results reported for CUDA Agent, a large-scale agentic RL system for CUDA kernel generation, matter not only to HPC practitioners but also to any enterprise seeking a competitive edge through AI-powered automation.

From Manual Tuning to Autonomous Code Synthesis

Traditionally, optimizing CUDA kernels, the backbone of GPU-accelerated workloads, required painstaking manual engineering. Developers would iterate through countless parameter tweaks, code rewrites, and benchmarking cycles just to eke out modest performance gains. This process was not only time-consuming but also hard to scale, especially as models and datasets ballooned in size.

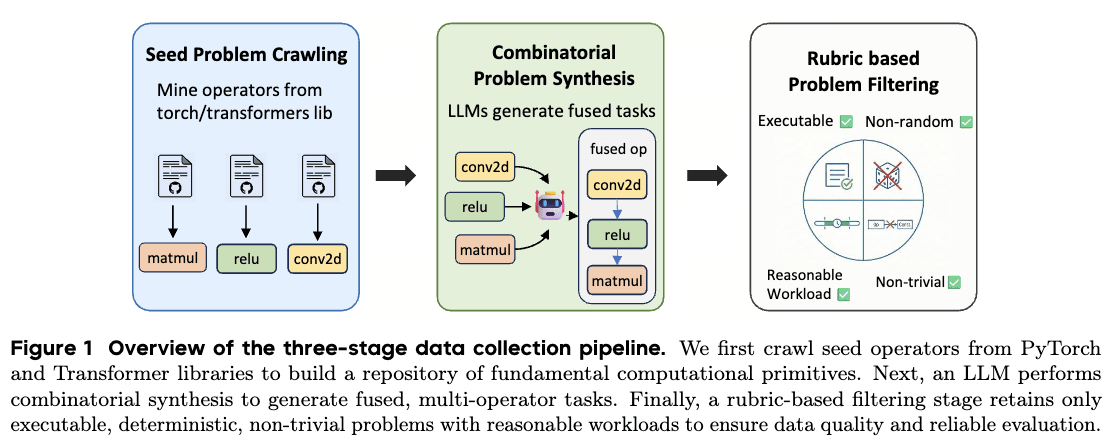

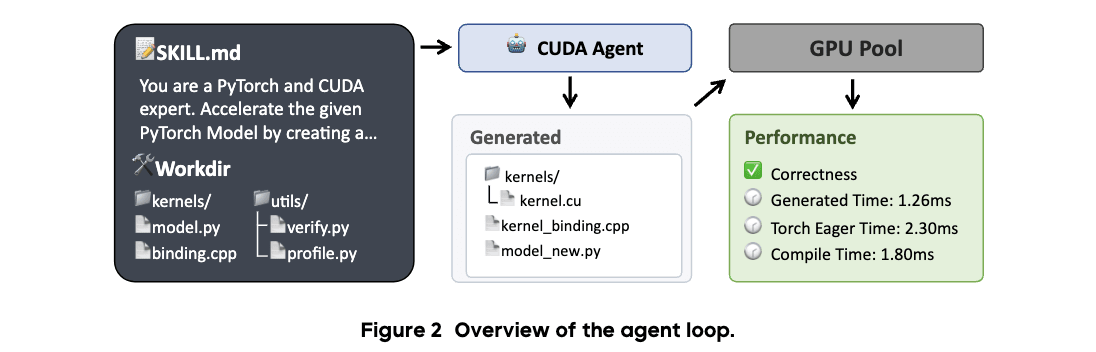

Enter agentic RL: a new breed of intelligent agents capable of autonomously exploring vast code spaces, synthesizing diverse training tasks, and learning optimal strategies through trial and error. The CUDA Agent system, developed by ByteDance Seed and Tsinghua University, exemplifies this shift. Leveraging a scalable data synthesis pipeline and a skill-augmented execution environment, CUDA Agent can generate and refine high-performance CUDA kernels without human micromanagement.

According to CUDA Agent's official results, the system synthesized over 6,000 unique training operations, providing a rich and diverse landscape for learning robust optimization skills.

Scaling Reinforcement Learning for Real-World Impact

While RL has long shown promise in controlled settings, its impact on production-scale code generation has been limited by issues of stability, generalization, and compute cost. CUDA Agent addresses these barriers head-on. Its scalable data pipeline feeds the RL agent with a continuous stream of synthesized tasks, ensuring exposure to a wide spectrum of kernel structures and performance bottlenecks.
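
To make the idea of a synthesized task stream concrete, here is a minimal Python sketch. It is not CUDA Agent's actual pipeline, which this article does not describe in detail. It assumes, purely for illustration, that new tasks are formed by chaining a small set of seed operators; the operator names, `SEED_OPS`, and `synthesize_tasks` are all hypothetical.

```python
import itertools
import random

# Hypothetical seed operators, chosen only for illustration.
SEED_OPS = ["matmul", "softmax", "layernorm", "relu", "conv2d", "reduce_sum"]


def synthesize_tasks(seed_ops, max_chain=3, seed=0):
    """Yield unique operator chains in a shuffled but reproducible order.

    Each chain stands in for one training task, for example a fused
    "matmul then relu" kernel the agent would be asked to write.
    """
    rng = random.Random(seed)
    chains = []
    for length in range(1, max_chain + 1):
        # Ordered chains without repeated operators.
        chains.extend(itertools.permutations(seed_ops, length))
    rng.shuffle(chains)
    for chain in chains:
        yield {"name": "_then_".join(chain), "ops": list(chain)}


tasks = list(synthesize_tasks(SEED_OPS))
print(len(tasks))  # 6 + 30 + 120 = 156 unique chains
```

Even six seed operators yield 156 distinct chains at a maximum length of three, which hints at how a combinatorial approach could reach thousands of tasks. A real pipeline would also need to filter out chains that are invalid or trivial.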

This approach supports what AI researchers call long-horizon training, in which the agent learns durable optimization strategies that generalize to unseen workloads rather than short-term tricks. The CUDA Agent environment runs a feedback loop that tightly couples code generation, execution profiling, and reward computation, producing stable and meaningful learning signals across thousands of iterations.
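
The generate, profile, reward loop can be sketched in a few lines of Python. This is an illustrative skeleton under stated assumptions, not CUDA Agent's implementation: the reward shape (fixed penalties for failures, clipped speedup otherwise), the `KernelResult` fields, and the `generate` and `profile` callables are all hypothetical stand-ins for a policy model and a GPU profiling harness.

```python
from dataclasses import dataclass


@dataclass
class KernelResult:
    compiled: bool      # did the candidate kernel build?
    correct: bool       # did its output match the reference?
    runtime_ms: float   # measured runtime; ignored if not correct


def compute_reward(result, baseline_ms, max_speedup=5.0):
    """Assumed reward: penalize failures, otherwise reward clipped speedup."""
    if not result.compiled:
        return -1.0
    if not result.correct:
        return -0.5
    return min(baseline_ms / result.runtime_ms, max_speedup)


def run_episode(generate, profile, baseline_ms, max_turns=4):
    """Alternate generation and profiling, feeding each result back in."""
    feedback = None
    rewards = []
    for _ in range(max_turns):
        source = generate(feedback)   # policy proposes kernel source
        feedback = profile(source)    # environment compiles, checks, times it
        rewards.append(compute_reward(feedback, baseline_ms))
    return rewards


# Toy stand-ins: a scripted profiler instead of a real GPU run.
scripted = iter([
    KernelResult(False, False, 0.0),  # fails to compile
    KernelResult(True, False, 1.0),   # compiles but wrong output
    KernelResult(True, True, 4.0),    # correct, same speed as baseline
    KernelResult(True, True, 2.0),    # correct, 2x faster
])
rewards = run_episode(
    generate=lambda feedback: "kernel source",
    profile=lambda source: next(scripted),
    baseline_ms=4.0,
)
print(rewards)  # [-1.0, -0.5, 1.0, 2.0]
```

Gating the speed reward on correctness is one common way to keep the learning signal meaningful: a fast kernel that produces wrong output should never score above a slow correct one.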

These design principles echo best practices advocated by leading AI research groups, such as DeepMind and OpenAI, who emphasize the importance of diverse training data and skill-augmented environments for robust code synthesis (DeepMind, 2024).

Benchmarking Autonomous Kernel Generation: The Evidence

Numbers matter—especially when evaluating claims of AI-driven performance breakthroughs. CUDA Agent delivers on its promise with compelling empirical results:

- 98.8% overall pass rate on the KernelBench benchmark, demonstrating reliability across a broad spectrum of tasks.

- 96.8% of generated kernels outperform torch.compile, PyTorch's built-in just-in-time compiler.

- 2.11x average speedup compared to torch.compile, a substantial gain in measured kernel throughput.
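
For readers who want to see how figures of this kind are typically derived, the sketch below computes a pass rate, a faster-than-baseline rate, and an average speedup from per-task timings. The data is made up, and the choices of denominator (all tasks) and average (arithmetic mean over passing tasks) are assumptions; the article does not state which conventions CUDA Agent's evaluation uses.

```python
from statistics import mean

# Made-up per-task results, for illustration only.
results = [
    {"task": "a", "passed": True, "agent_ms": 1.0, "baseline_ms": 2.0},
    {"task": "b", "passed": True, "agent_ms": 2.0, "baseline_ms": 1.0},
    {"task": "c", "passed": True, "agent_ms": 1.0, "baseline_ms": 4.0},
    {"task": "d", "passed": False, "agent_ms": None, "baseline_ms": 3.0},
]


def summarize(results):
    """Aggregate per-task results; assumes at least one task passed."""
    passed = [r for r in results if r["passed"]]
    speedups = [r["baseline_ms"] / r["agent_ms"] for r in passed]
    return {
        # Share of all tasks where the generated kernel was correct.
        "pass_rate": len(passed) / len(results),
        # Share of all tasks where the kernel was correct AND faster.
        "faster_rate": sum(s > 1.0 for s in speedups) / len(results),
        # Arithmetic mean speedup over passing tasks (an assumption;
        # a geometric mean is another common choice).
        "mean_speedup": mean(speedups),
    }


summary = summarize(results)
print(summary["pass_rate"], summary["faster_rate"])  # 0.75 0.5
```

Note that an average speedup can be pulled upward by a few large wins, so it is worth reading it alongside the faster-than-baseline rate rather than on its own.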

These outcomes are not just incremental improvements—they suggest that agentic RL systems are ready for mainstream deployment in performance-critical environments. As highlighted in a 2024 McKinsey report, organizations that embed advanced automation into their core workflows realize up to 30% efficiency gains over competitors relying on manual processes.

For enterprises in sectors like autonomous vehicles, scientific computing, and AI infrastructure, these results indicate a clear path to sustainable competitive advantage.

Beyond Speed: Building Robust, Adaptive AI Agents

While raw speed is impressive, the real breakthrough lies in adaptability. CUDA Agent's architecture demonstrates how agentic RL can be extended beyond narrow task optimization to develop generalizable problem-solving capabilities. By continually synthesizing new training ops and iteratively refining its skill set, the system adapts to novel kernel patterns, hardware configurations, and evolving application needs.

This adaptability is especially valuable as organizations embrace heterogeneous computing environments—mixing CPUs, GPUs, FPGAs, and custom accelerators. According to Gartner's 2025 AI and automation trends, more than 70% of enterprises will deploy multi-architecture AI pipelines by 2027, amplifying the need for agile, self-optimizing code generation frameworks.

At Jina Code Systems, we've observed firsthand how these agentic approaches accelerate the development and deployment of intelligent digital systems—enabling faster iteration cycles, higher system reliability, and lower operational costs.

Real-World Applications: From HPC to AI Agents at Scale

The implications of large-scale agentic RL for CUDA kernel generation extend far beyond academic benchmarks. In the real world, organizations are harnessing these advances to:

- Automate performance tuning for deep learning workloads, reducing the time-to-market for new AI models.

- Optimize simulation and rendering pipelines in scientific computing and digital content creation.

- Drive cost savings in cloud and edge environments by minimizing compute resource waste.

- Empower AI agents to self-improve their execution logic, paving the way for autonomous software engineering at scale.

For example, leading cloud providers have begun integrating RL-based kernel optimizers to dynamically tune infrastructure for varying customer demands—a move projected to save millions in operational expenses annually (TechCrunch, 2025).

These trends underscore the urgency for technology leaders to invest in agentic automation platforms—not only to stay competitive, but to unlock new forms of digital innovation previously out of reach.

Conclusion

As the boundary between software and intelligent agents continues to blur, agentic RL systems like CUDA Agent are setting a new standard for autonomous code optimization and AI infrastructure engineering. The leap from manual tuning to adaptive, self-improving code is no longer theoretical—it's already delivering measurable value across sectors. For enterprises ready to seize this opportunity, the next step is clear: invest in AI-driven automation frameworks that can learn, adapt, and scale with your business needs.

At Jina Code Systems, we specialize in architecting and deploying agentic AI solutions that empower organizations to operate smarter and innovate faster. If your team is looking to accelerate digital transformation through intelligent automation, explore our latest insights or connect with our experts today.