In the not-so-distant past, cutting-edge AI research was the domain of brilliant individuals, late nights, and the occasional whiteboard epiphany. Today, that paradigm is shifting at breakneck speed. Autonomous AI agents that edit code, run experiments, and build on their own results are rewriting the rules of what progress looks like in machine learning. If you think this is science fiction, think again. Projects like karpathy/autoresearch are already demonstrating how an agent, left to its own devices overnight, can iterate on code, run experiments, and deliver results without anyone at the keyboard. The implications for technology leaders and enterprise developers are profound and disruptive.

From Human-Centric Research to Agentic Autonomy

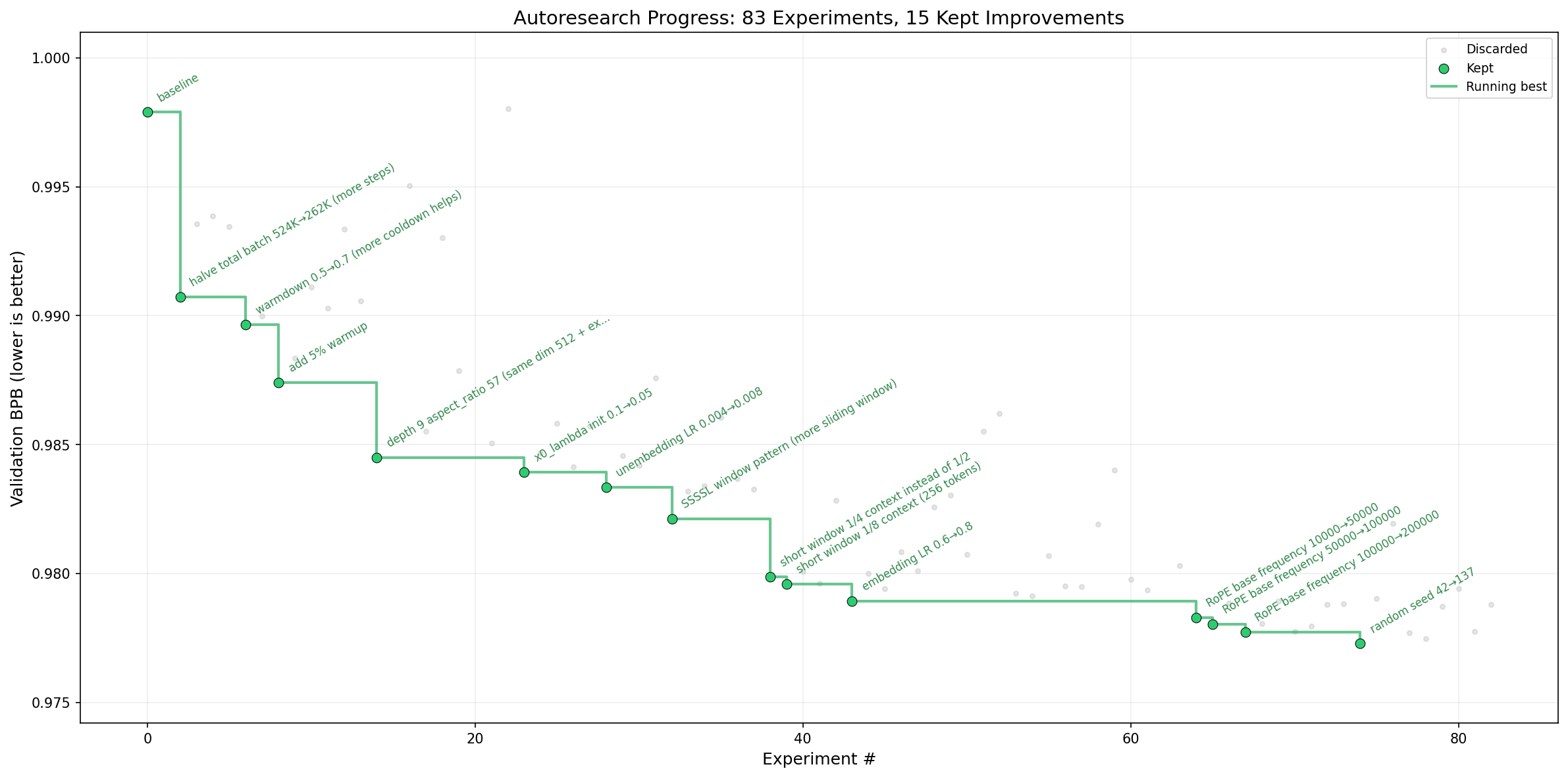

The classic model of AI research, where humans write code, run experiments, and gradually refine models, is limited by human throughput. Human cycles are slow, error-prone, and constrained by our need for rest and coordination. By contrast, autonomous AI agents operate without these constraints. As outlined in karpathy/autoresearch, a single agent can repeatedly modify a core training script, run a five-minute experiment, and check whether the change improved the validation metric. At about twelve runs per hour, a night of unattended operation yields on the order of a hundred experiments, each building on the last.
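
The modify, run, compare loop described above can be sketched as a simple keep-if-better search. This is a minimal illustration under stated assumptions, not the autoresearch implementation: `run_experiment` and `propose_change` are hypothetical stand-ins for a real five-minute training run and for the agent's code edit, and the "script" is reduced to a single learning-rate setting.

```python
import random
from typing import Dict, List, Tuple

Config = Dict[str, float]


def run_experiment(config: Config) -> float:
    """Hypothetical stand-in for a five-minute training run.

    Returns a validation score where lower is better. This toy function
    is minimised at lr = 0.01; a real run would train and evaluate a model.
    """
    return (config["lr"] - 0.01) ** 2


def propose_change(config: Config, rng: random.Random) -> Config:
    """Hypothetical stand-in for the agent editing the training script."""
    candidate = dict(config)
    candidate["lr"] = max(1e-5, candidate["lr"] * rng.uniform(0.5, 1.5))
    return candidate


def research_loop(
    start: Config, n_experiments: int, seed: int = 0
) -> Tuple[Config, float, List[float]]:
    """Run n_experiments trials, keeping a change only if it improves the score."""
    rng = random.Random(seed)
    best, best_score = start, run_experiment(start)
    history = [best_score]  # best score seen after each experiment
    for _ in range(n_experiments):
        candidate = propose_change(best, rng)
        score = run_experiment(candidate)
        if score < best_score:  # keep improvements, discard everything else
            best, best_score = candidate, score
        history.append(best_score)
    return best, best_score, history


if __name__ == "__main__":
    best, score, history = research_loop({"lr": 0.1}, n_experiments=100)
    print(f"best lr={best['lr']:.5f}, score={score:.3e}")
```

Because each candidate starts from the current best configuration, every accepted change becomes the baseline for the next experiment, which is what "each building on the last" means in practice.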

This approach mirrors the agentic workflows emerging across the industry. According to Gartner's 2025 AI forecast, more than 60% of new platform R&D initiatives will incorporate AI-driven automation by 2027, up from just 15% in 2023. Agents don't just speed up the process—they fundamentally change its nature. They can optimize model architectures, tune hyperparameters, and even suggest novel training regimes, all without human micromanagement.

- Continuous experimentation: With five-minute runs, an agent completes about a dozen trials per hour on a single GPU, around the clock

- Objective evaluation: Each change is benchmarked using a consistent metric (e.g., validation bits per byte) that does not depend on the tokenizer's vocabulary size, so architecture changes are compared fairly

- Human-in-the-loop oversight: Researchers guide the direction but aren't bottlenecked by manual iteration
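
To make the validation bits per byte metric concrete, here is a minimal sketch of the calculation. It assumes you already have the per-token negative log-likelihoods in nats (the usual output of a cross-entropy loss) and the raw validation text; the function name and signature are illustrative, not taken from autoresearch.

```python
import math
from typing import Sequence


def bits_per_byte(token_nll_nats: Sequence[float], text: str) -> float:
    """Convert summed per-token loss (in nats) into bits per UTF-8 byte.

    Dividing by the byte count instead of the token count is what lets
    models with different tokenizers be compared on the same scale.
    """
    n_bytes = len(text.encode("utf-8"))
    if n_bytes == 0:
        raise ValueError("text must contain at least one byte")
    total_nats = sum(token_nll_nats)
    return total_nats / (math.log(2) * n_bytes)


if __name__ == "__main__":
    # Two tokenizations of the same 4-byte string with the same total loss.
    coarse = [math.log(4)] * 2  # 2 tokens, 2 bits each
    fine = [math.log(2)] * 4    # 4 tokens, 1 bit each
    print(bits_per_byte(coarse, "abcd"), bits_per_byte(fine, "abcd"))
```

Both tokenizations carry the same total loss over the same bytes and therefore get the same score, whereas a per-token loss would differ between them.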

Reproducibility and Efficiency: The Hidden Edge of Autonomous Research

One of the chronic pain points in AI research is reproducibility. Differences in compute, code drift, and undocumented tweaks can make it maddeningly difficult to replicate results. The autoresearch approach addresses this head-on. By enforcing a fixed time budget for experiments (5 minutes per run) and confining agent modifications to a single script, outcomes become directly comparable—regardless of model complexity or architecture changes.

This design philosophy delivers two major benefits:

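- Platform consistency: Because the budget is wall-clock time, every run on a given machine gets the same amount of compute, so results are directly comparable within the constraints of your hardware. This avoids the apples-to-oranges comparisons that plague distributed training setups, with the trade-off that scores from different hardware are not comparable with each other.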

- Resource efficiency: Experiments are bounded, allowing for predictable use of compute and energy—an increasingly critical concern as organizations scale their AI investments.
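
A fixed time budget is straightforward to enforce in a training loop. The sketch below is one way to do it, assuming a hypothetical `step_fn` that performs a single training step; the clock is injectable so the loop can be tested without waiting. The helper `runs_per_night` just does the arithmetic for bounded runs and ignores setup and evaluation overhead.

```python
import time
from typing import Callable


def train_with_budget(
    step_fn: Callable[[], None],
    budget_seconds: float,
    clock: Callable[[], float] = time.monotonic,
) -> int:
    """Call step_fn repeatedly until the wall-clock budget is used up.

    Returns the number of steps completed. A step that starts before the
    deadline is allowed to finish, so the run can overshoot by one step.
    """
    deadline = clock() + budget_seconds
    steps = 0
    while clock() < deadline:
        step_fn()
        steps += 1
    return steps


def runs_per_night(hours: float, minutes_per_run: float) -> int:
    """Upper bound on back-to-back runs, ignoring per-run overhead."""
    return int(hours * 60 // minutes_per_run)


if __name__ == "__main__":
    done = train_with_budget(lambda: time.sleep(0.01), budget_seconds=0.1)
    print(f"completed {done} steps in the budget")
    print(f"8 hours of 5-minute runs: {runs_per_night(8, 5)}")
```

Using a monotonic clock rather than the system time keeps the budget stable if the system clock is adjusted during a run.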

According to a 2024 McKinsey survey, enterprises that standardized experimentation cycles and automated evaluation saw a 2.3x increase in model deployment velocity versus those relying on manual processes. The message is clear: agentic automation isn't just about speed, but also about reliability and scaling best practices.

Scaling Down: Making Autonomous Research Accessible

While the vision of swarms of agents running on cloud megastructures conjures images of hyperscale innovation, the reality is that autonomous research can—and should—be democratized. The autoresearch project deliberately keeps its architecture lean: a single GPU, a single modifiable script, and minimal dependencies. This design lowers the barrier to entry for smaller organizations, startups, and even individual developers.

Notably, the community has already adapted the approach for diverse platforms—from MacBooks to Windows RTX devices—by tweaking hyperparameters and using lighter datasets such as TinyStories. This modularity enables rapid experimentation at any scale. As Jina Code Systems has observed in client projects, allowing teams to run local, bounded experiments with agentic oversight accelerates innovation without the need for massive infrastructure investments.

- Adaptable for smaller hardware: Hyperparameters and datasets can be tuned for resource-constrained environments

- Community-driven forks: Open-source collaboration expands platform support and best practices

The Organizational Playbook: Engineering AI-Driven Research Pipelines

Adopting autonomous agents isn't just a technical shift; it requires an organizational mindset change. Instead of researchers tinkering with every line of code, teams define high-level objectives and constraints in plain-text instruction files (such as program.md). Agents then execute within these guardrails, reporting back on progress and surfacing novel solutions for human review.

According to Deloitte's 2025 AI in Business study, organizations that empower agentic workflows report a 40% reduction in time-to-insight for R&D initiatives. This hybrid paradigm—agents for execution, humans for direction and oversight—is rapidly becoming the standard in AI-driven enterprises.

Key ingredients for success include:

- Clear experiment protocols: Define metrics and constraints up front

- Auditability: All agent actions and code changes are logged for transparency

- Iterative improvement: The research "codebase" evolves alongside the models themselves, fostering a culture of continuous learning
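
For the auditability point, an append-only log with one JSON record per experiment is often enough to start with. The sketch below is an assumption about how such a log could look, not a description of any particular tool: the field names, the `experiments.jsonl` file name, and the idea of hashing the script text are all illustrative choices.

```python
import hashlib
import json
import tempfile
import time
from pathlib import Path
from typing import Dict, List


def log_experiment(log_path: Path, script_text: str, metric: float, kept: bool) -> Dict:
    """Append one experiment record to a JSON Lines audit log."""
    record = {
        "timestamp": time.time(),
        # The hash identifies the exact script version without storing it inline.
        "script_sha256": hashlib.sha256(script_text.encode("utf-8")).hexdigest(),
        "metric": metric,
        "kept": kept,  # whether the agent's change was accepted
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record


def read_log(log_path: Path) -> List[Dict]:
    """Load every record from the audit log, skipping blank lines."""
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


if __name__ == "__main__":
    path = Path(tempfile.mkdtemp()) / "experiments.jsonl"
    log_experiment(path, "lr = 0.01", metric=1.23, kept=True)
    log_experiment(path, "lr = 0.05", metric=1.30, kept=False)
    for row in read_log(path):
        print(row["script_sha256"][:8], row["metric"], row["kept"])
```

Logging rejected changes as well as accepted ones gives reviewers the full trail of what the agent tried, not only what it kept.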

Real-World Impact: Autonomous Agents in Action

These agentic research loops are no longer hypothetical. Enterprises in sectors from pharmaceuticals to fintech are using autonomous agents to speed up discovery. DeepMind's AlphaFold, although a deep learning model built by human researchers rather than an autonomous research agent, showed how much learned systems can achieve on protein structure prediction, a challenge that had stumped researchers for decades. Closer to the enterprise AI stack, Microsoft Research has piloted agent-driven experimentation platforms that accelerate model selection and tuning by over 10x.

By 2027, enterprises using autonomous AI agents in R&D will deliver new models to production 30% faster and with 50% fewer manual interventions. — Gartner, 2025

At Jina Code Systems, we have helped clients implement agentic pipelines that reduced experiment cycle times from days to hours, while maintaining rigorous reproducibility and model governance.

Conclusion

The era of autonomous AI research is upon us—and those who embrace agent-driven workflows will outpace their competitors in velocity, reliability, and scale. Whether you're running experiments on a cloud cluster or a desktop GPU, the underlying principles are the same: let agents do the heavy lifting, so humans can focus on strategy and oversight. As the industry races toward agentic automation, now is the time to rethink your R&D playbook. For organizations ready to design, build, and scale intelligent agent-driven systems, Jina Code Systems stands ready as a partner to help you unlock the next wave of AI-powered innovation.